Pentagon Used Anthropic AI in Operation Against Maduro, Raising Questions About Multimillion-Dollar Contract

By Anastacio Alegría

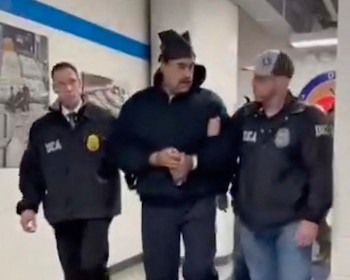

Washington, February 14 (IPU News) — The U.S. Department of Defense used the artificial intelligence model Claude, developed by Anthropic, during the military operation that captured former Venezuelan President Nicolás Maduro in Caracas in early January, according to sources familiar with the matter. (Reuters)

Claude's alleged involvement in the mission represents a milestone in the use of advanced AI tools in real-world military operations and has sparked tensions between the Pentagon and Anthropic, whose usage guidelines explicitly prohibit its technology from being used to facilitate violence, develop weapons, or conduct direct surveillance. (Investing.com [2])

How Claude Was Used

According to reports, Claude was used not only during the planning phase but also to provide real-time support during the operation itself. It was accessed through a collaboration with Palantir Technologies, a company that provides secure data platforms for the Department of Defense and integrates AI models into classified networks that are not open to the public. (Investing.com [2])

Although the specifics of its role have not been disclosed, sources indicated that the model may have helped process and synthesize large volumes of information, such as signals intelligence, imagery analysis, and other complex data, during critical moments of the operation. (Lapaas Voice [3])

Repercussions for Anthropic and the AI Industry

The report has generated concern within Anthropic and among AI ethics experts, because the company has positioned itself as a champion of the responsible and safe use of the technology, with strict restrictions on applications in violent or wartime contexts. The possible use of Claude in a high-risk operation raises questions about **how AI usage policies are interpreted and applied in classified military environments**. (Investing.com [2])

The revelation has strained relations between the Pentagon and Anthropic and could affect the future of a contract valued at up to **$200 million**, signed last year to integrate AI technologies into defense and data analytics systems. (Fox News [4])

Broader Context of AI in Defense

The use of Claude in a military mission comes as the Pentagon pushes to integrate advanced AI tools into classified networks and sensitive operations, and is negotiating with technology providers to expand access to language models and data-processing capabilities in those classified environments. (Reuters [5])

At the same time, AI developers such as Anthropic have publicly expressed concern about unregulated applications of the technology in a military context, arguing that clearer legal and ethical frameworks are needed to prevent uses that could cause unintended harm. (Investing.com [2])

Representatives for the Department of Defense and Anthropic declined to comment specifically on the use of Claude in the operation or on the future of the contract, citing the classified nature of the discussions and the confidentiality of the agreements.
